Structured tools, cleaner workflows.

Connect external services via MCP. The model can act, not just answer. Learn about MCP →

Run local models, use 15 native tools, attach photos for OCR, connect MCP workflows — all on-device, all offline-capable.

$2.99 one-time. No subscriptions. No cloud required.

$ solollm status

models 13 local (Gemma 4, Llama, Qwen…)

tools 15 built-in (OCR, PDF, web, code…)

privacy no cloud, no accounts, no telemetry

price $2.99 one-time

Why SoloLLM

Most local AI apps stop at chat. SoloLLM adds native tools, persistent history, model choice, and exportable work.

Early Testers

Feedback from beta testers and early adopters — not App Store reviews.

Built-in Tools

PDF, documents, web, code, OCR, translation, clipboard, and Siri — all built in, all on-device.

MCP + Extensibility

MCP support gives developers structured tool connectivity without making the whole product feel like a dev dashboard.

Technical depth exists when you want it. The interface stays simple for everyday use.
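
For readers who want that depth, here is a rough sketch of what a single MCP tool call looks like on the wire. MCP is built on JSON-RPC 2.0, and a client asks a server to run a tool with a `tools/call` request. The tool name `create_reminder`, its arguments, and the helper `build_tool_call` are hypothetical, chosen only for illustration; how SoloLLM wires this up internally is not described on this page.

```python
import json
from itertools import count

# Simple incrementing request ids, as JSON-RPC expects a unique id per request.
_ids = count(1)


def build_tool_call(name: str, arguments: dict) -> dict:
    """Build an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    return {
        "jsonrpc": "2.0",
        "id": next(_ids),
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    }


# Hypothetical tool exposed by some external MCP server (an assumption).
request = build_tool_call(
    "create_reminder",
    {"title": "Renew passport", "due": "2025-03-01"},
)
print(json.dumps(request, indent=2))
```

The structured `name` plus `arguments` payload is what lets the model act on an external service instead of only describing what to do.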

Privacy

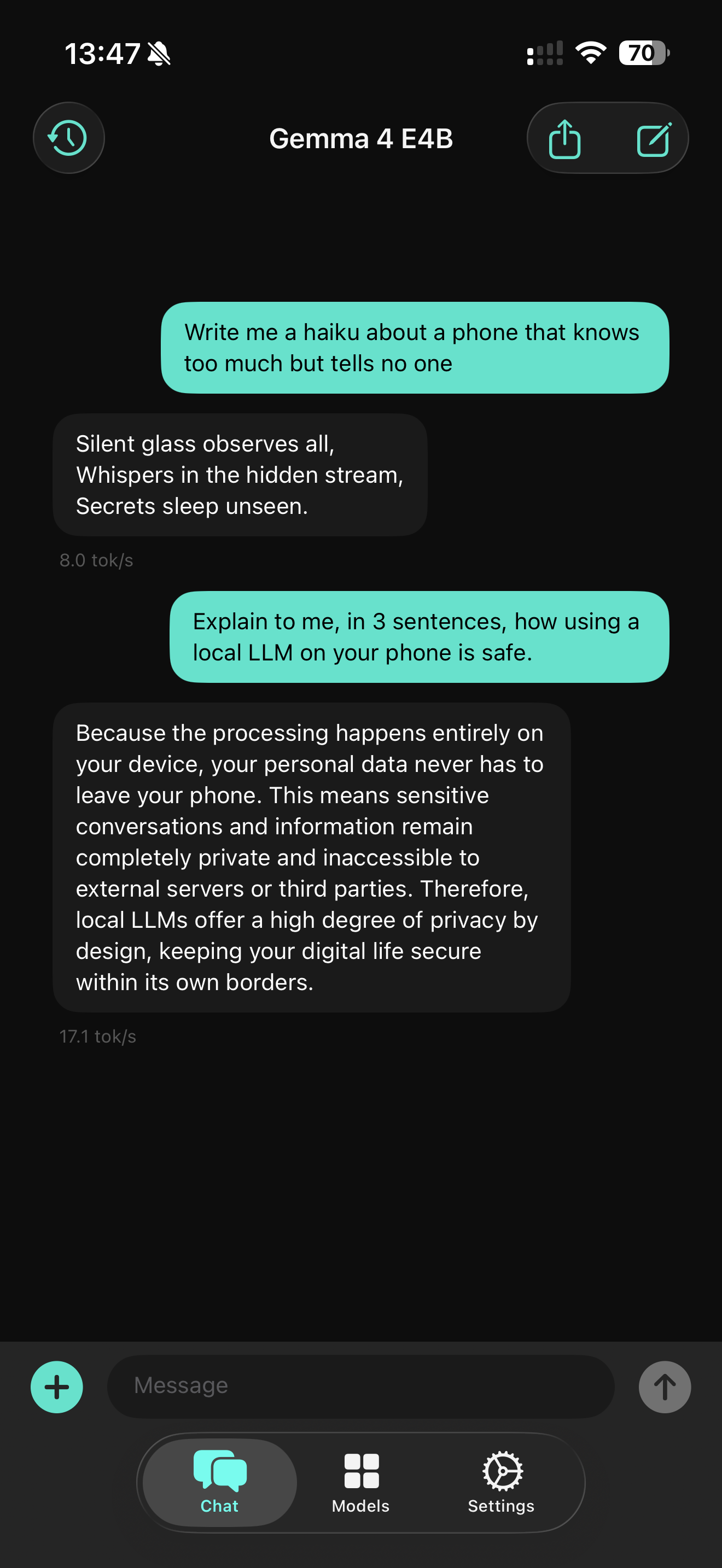

Built for anyone looking for offline AI on iPhone or a private ChatGPT alternative.

Models

Pick a fast model for quick tasks or a stronger reasoning model when you need depth. All run locally, all included.

$2.99 one-time. 13 models, 15 tools, no subscriptions. Works offline.